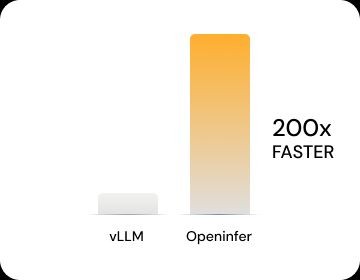

Performance

Proven at the Edge

Turn Distributed Hardware Into One AI System

Instead of requiring specialized infrastructure, OpenInfer uses the resources already present across your systems and coordinates them to run large models locally.

It supports 500+ AI model architectures and can even run across unused VMs, without rewriting or shrinking models.

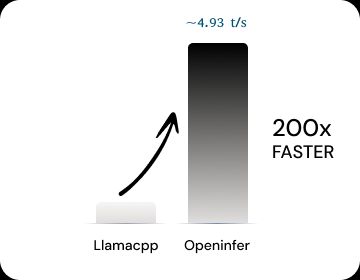

Unlocking Memory Footprint

Existing runtimes slow down significantly when they run on virtual machines (VMs) with a limited memory footprint. In this setup, we used 4 VMs in a single AWS data center. Each was an Intel Xeon instance with 8 GB of RAM and 4 cores, and ping latency between VMs was around 700 microseconds. A single small batch was used. By virtually meshing these VMs, OpenInfer delivered a major unlock compared to competitors, running an 8B Q8 Llama4 at around 4,493 tokens/sec.

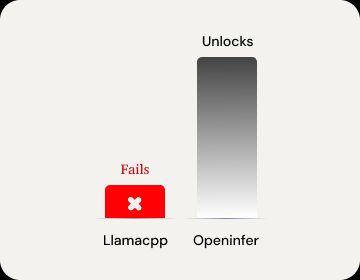

Large Models on Fragmented Compute

In this experiment, we ran inference with a larger model (Llama 70B, Q4) and a single small batch on Intel Xeon virtual machines. The VMs ran on AWS, each with 32 GB of RAM and 16 cores, with a ping latency of ~700 microseconds. Existing runtimes failed to operate, while OpenInfer used the 4 available VMs to generate tokens at ~1.3 tokens/second.
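To see why a single VM is not enough in the two CPU experiments above, a rough back-of-the-envelope check helps. The sketch below is illustrative only and is not OpenInfer code: it estimates weight size as parameters × bits per weight / 8 (real Q8 and Q4 formats carry some extra overhead for scales), and `USABLE_FRACTION` is a hypothetical headroom factor for the OS, KV cache, and activations, not a measured value.

```python
from dataclasses import dataclass


@dataclass
class Setup:
    name: str
    params_billion: float  # model size in billions of parameters
    bits_per_weight: int   # nominal quantization width (Q8 -> 8, Q4 -> 4)
    vm_ram_gb: float       # RAM per VM, as stated in the text
    vm_count: int          # number of VMs, as stated in the text


# Assumption: share of each VM's RAM that is left for weights after the OS,
# KV cache, and activations. Illustrative only, not a measured value.
USABLE_FRACTION = 0.8


def weights_gb(setup: Setup) -> float:
    """Approximate weight size in GB: parameters x bits per weight / 8."""
    return setup.params_billion * setup.bits_per_weight / 8


def fits(setup: Setup, vms: int) -> bool:
    """True if the weights fit in the usable RAM pooled across `vms` VMs."""
    return weights_gb(setup) <= vms * setup.vm_ram_gb * USABLE_FRACTION


setups = [
    Setup("8B Q8", params_billion=8, bits_per_weight=8, vm_ram_gb=8, vm_count=4),
    Setup("70B Q4", params_billion=70, bits_per_weight=4, vm_ram_gb=32, vm_count=4),
]

for s in setups:
    print(
        f"{s.name}: ~{weights_gb(s):.0f} GB of weights, "
        f"fits on 1 VM: {fits(s, 1)}, "
        f"fits on {s.vm_count} VMs: {fits(s, s.vm_count)}"
    )
```

Under these assumptions, the 8B Q8 weights (~8 GB) already fill an entire 8 GB VM, and the 70B Q4 weights (~35 GB) exceed a 32 GB VM, while both fit comfortably once the RAM of all 4 VMs is pooled.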

Large Batch Sizes

Batching (including prefill) becomes more challenging on heterogeneous systems, but it is critical to addressing total cost of ownership (TCO) for market adopters. In this experiment, we compared OpenInfer's performance against another existing infrastructure player. A batch size of 20 was used on 2 separate machines with GPUs. OpenInfer delivered 2x higher throughput than existing options.